Improving Productivity with AI Engine Kernel Programming and Vitis AI Engine API

Introduction

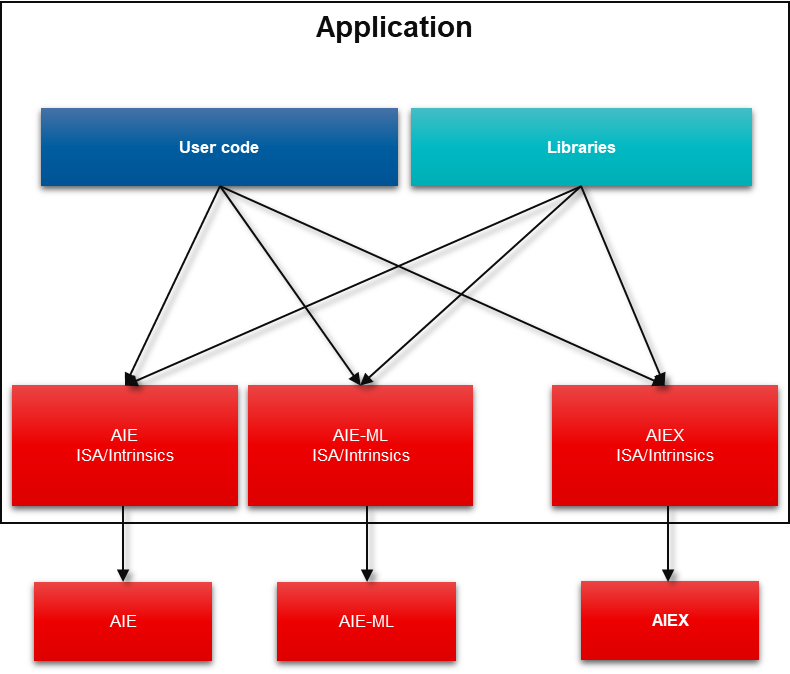

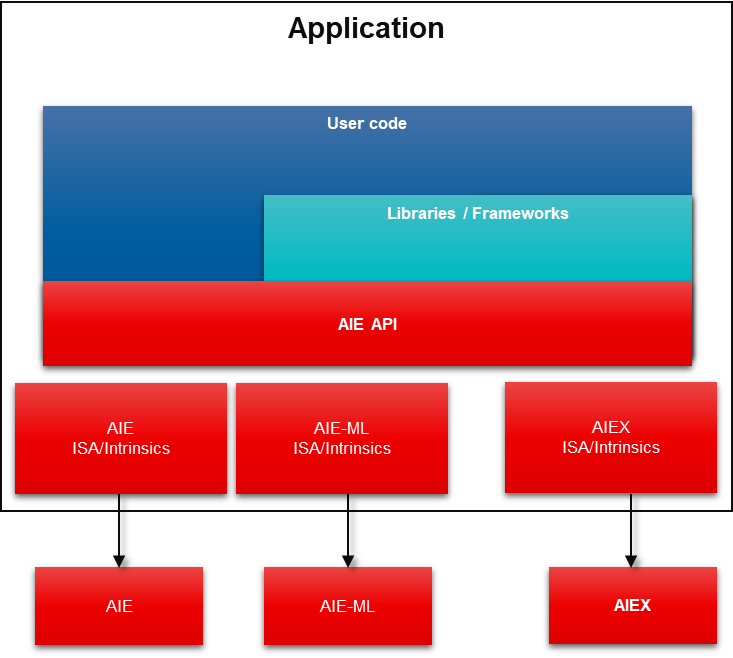

This article introduces AI Engine kernel development with the high-level AI Engine API in Vitis 2021.2, which can significantly improve your design productivity. The AI Engine API is a higher-level C++ abstraction, implemented as a header-only library, that provides types and operations that are transparently translated into efficient, optimized low-level AI Engine intrinsics. It also improves portability across different AI Engine architectures. The AI Engine API is the primary method for AI Engine kernel programming.

AI Engine Intrinsics

AI Engine intrinsics provide a flexible, ISA-level interface for AI Engine kernel programming. They expose a wide range of vector operations, enabling efficient implementations of many types of primitives.

Most functionality is accessible only through intrinsics (about 10,000 intrinsics for the first-generation AI Engine). However, programming with intrinsics introduces several challenges:

- Developers must understand low-level details of the AI Engine architecture.

- Code must be reimplemented for each data type.

- There is no source portability at the intrinsic level.

- Code must be changed when targeting a different AI Engine architecture.

AI Engine API

The Vitis AI Engine API is a higher-level C++ abstraction, implemented as a header-only library that provides types and operations that are translated into efficient AI Engine intrinsics. It provides parametrizable data types that enable generic programming, so the most common operations can be implemented in a uniform way. For each data type, it transparently translates higher-level primitives into optimized low-level intrinsics. As a result, the AI Engine API is a portable programming interface for AI Engine accelerators: the same kernel source can target different AI Engine architectures, such as AI Engine, AIE-ML, and future AI Engine architectures.
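To illustrate what parametrizable data types buy you, the following plain C++ sketch (not the AI Engine API itself) models a vector type whose element type and lane count are template parameters, loosely in the spirit of aie::vector<T, N>. The names Vec and vadd are hypothetical; the point is that one generic function covers every type and lane-count combination, whereas intrinsic-level code typically needs a separate implementation per data type.

```cpp
#include <array>
#include <cassert>
#include <cstddef>
#include <cstdint>

// Hypothetical stand-in for a parametrizable vector type: the element type T
// and the lane count N are template parameters (plain C++, not the AIE API).
template <typename T, std::size_t N>
struct Vec {
    std::array<T, N> lanes{};

    T get(std::size_t i) const {
        assert(i < N);  // lane index must be in range
        return lanes[i];
    }
    void set(std::size_t i, T value) {
        assert(i < N);
        lanes[i] = value;
    }
};

// One generic element-wise add serves every (T, N) combination; the cast
// keeps the result in T after C++ integer promotion (e.g. int16_t -> int).
template <typename T, std::size_t N>
Vec<T, N> vadd(const Vec<T, N>& a, const Vec<T, N>& b) {
    Vec<T, N> result;
    for (std::size_t i = 0; i < N; ++i) {
        result.lanes[i] = static_cast<T>(a.lanes[i] + b.lanes[i]);
    }
    return result;
}
```

The same vadd instantiates for, say, Vec<std::int16_t, 16> and Vec<float, 8> without any per-type code.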

The AIE API will be the primary programming model for AI Engine kernels, and Xilinx collateral and examples will be based on it. Meanwhile, Xilinx will continue to support and maintain the intrinsics.

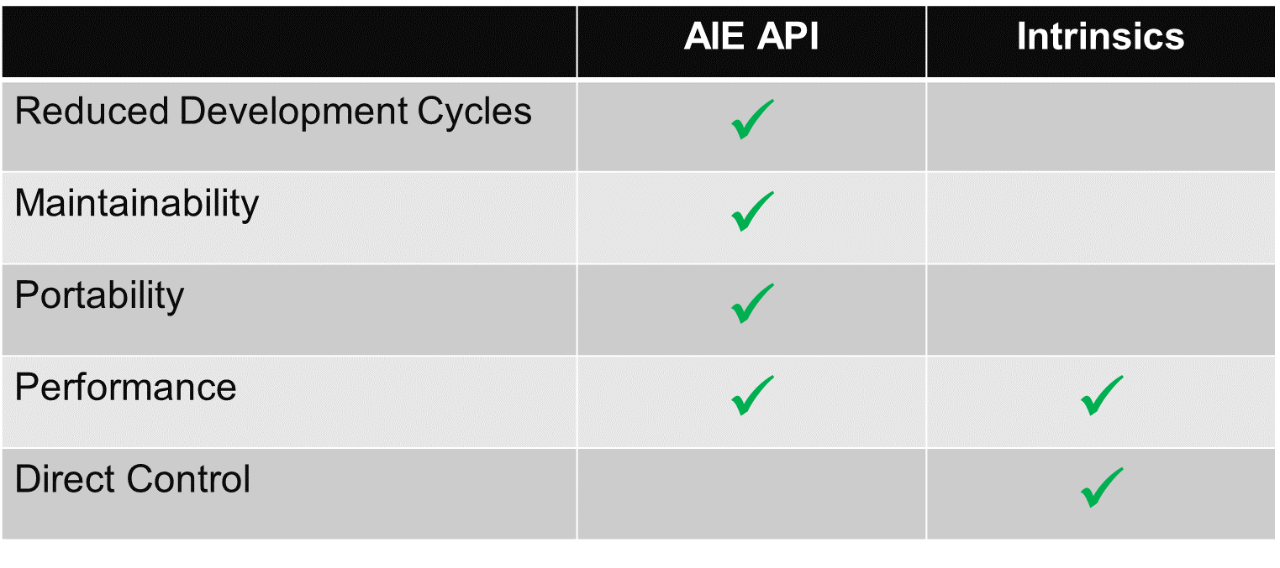

AI Engine API vs AI Engine Intrinsics

The AIE API provides an easier kernel programming model: its higher-level abstraction requires less knowledge of the underlying hardware to achieve optimized performance.

How to use the AI Engine API in Vitis 2021.2

The AI Engine API is included with Vitis 2021.2. Using it takes four simple steps:

- Include the AI Engine API header at the top of your AI Engine kernel code: `#include <aie_api/aie.hpp>`

- Write the AI Engine kernel code using the AI Engine API.

- Leave the graph code as is; no changes to graph coding are required.

- Compile and simulate the AI Engine kernel code using the standard AI Engine tools.

Examples

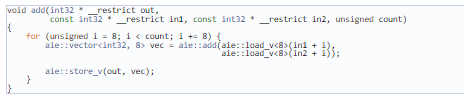

The AIE API provides a set of functions that implement arithmetic operations on vector types. Operands may be vectors, values, or vector element references, and the supported operand combinations are:

- Vector / Vector: the type and the size of the vectors must match. The operation is performed element-wise between the corresponding elements of each vector.

- Value / Vector: the type of the value and the type of the vector elements must match. The result is the same as if the value were broadcast to a vector and then operated on with the vector argument.

- Vector element reference / Vector: similar to Value / Vector, but because an element reference is used, the AIE API may optimize the operation by accessing the element directly from its original location.
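The three operand combinations can be modeled in plain C++ as follows. This is a sketch of the semantics only, not the AIE API: Lanes, add, and add_elem are hypothetical names, and the element-reference case is modeled as a (vector, index) pair that is read in place instead of being copied into a broadcast vector first.

```cpp
#include <array>
#include <cassert>
#include <cstddef>

// Plain C++ model (not the AIE API) of the operand combinations for an
// element-wise add. Lanes<T, N> is a hypothetical stand-in for a vector type.
template <typename T, std::size_t N>
using Lanes = std::array<T, N>;

// Vector / Vector: both operands share T and N, so mismatched element types
// or sizes fail to compile. The add is performed lane by lane.
template <typename T, std::size_t N>
Lanes<T, N> add(const Lanes<T, N>& a, const Lanes<T, N>& b) {
    Lanes<T, N> r{};
    for (std::size_t i = 0; i < N; ++i) r[i] = a[i] + b[i];
    return r;
}

// Value / Vector: same result as broadcasting the value to every lane and
// then doing a Vector / Vector add.
template <typename T, std::size_t N>
Lanes<T, N> add(T value, const Lanes<T, N>& b) {
    Lanes<T, N> broadcast{};
    broadcast.fill(value);
    return add(broadcast, b);
}

// Element reference / Vector: same result as Value / Vector, but the element
// is read from its original location in src instead of being broadcast into
// a temporary vector first.
template <typename T, std::size_t N>
Lanes<T, N> add_elem(const Lanes<T, N>& src, std::size_t idx,
                     const Lanes<T, N>& b) {
    assert(idx < N);
    const T& elem = src[idx];
    Lanes<T, N> r{};
    for (std::size_t i = 0; i < N; ++i) r[i] = elem + b[i];
    return r;
}
```

For a = {1, 2, 3, 4} and b = {10, 20, 30, 40}, add(a, b), add(5, b), and add_elem(a, 2, b) yield {11, 22, 33, 44}, {15, 25, 35, 45}, and {13, 23, 33, 43} respectively.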

The following code snippet shows an example that adds two input arrays into an output array using vectors. For simplicity, the count must be divisible by 8.

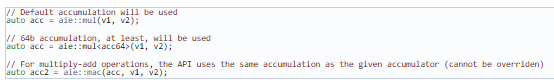
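As a hedged reference point, the plain C++ sketch below computes the same result as that vector-add kernel without using the AIE API, which can serve as a golden model when checking kernel output. The comments name the AIE API calls assumed to correspond to each step (aie::load_v, aie::add, aie::store_v); vector_add_ref and kLanes are hypothetical names.

```cpp
#include <cassert>
#include <cstddef>
#include <cstdint>

// Number of lanes processed per iteration; matches the "divisible by 8"
// restriction on count.
constexpr std::size_t kLanes = 8;

// Plain C++ reference model of the vector-add kernel. In an AIE API version,
// each iteration is assumed to load two 8-lane vectors (e.g. with
// aie::load_v<8>), add them (aie::add), and store the result (aie::store_v);
// here the 8 lanes are an inner scalar loop.
void vector_add_ref(const std::int32_t* in1, const std::int32_t* in2,
                    std::int32_t* out, std::size_t count) {
    assert(count % kLanes == 0 && "count must be divisible by 8");
    for (std::size_t i = 0; i < count; i += kLanes) {
        for (std::size_t lane = 0; lane < kLanes; ++lane) {
            out[i + lane] = in1[i + lane] + in2[i + lane];
        }
    }
}
```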

Operations that include a multiplication return an accumulator. The API defines a default accumulation for each combination of types, but users may specify a larger number of accumulation bits by explicitly passing an accumulator tag.

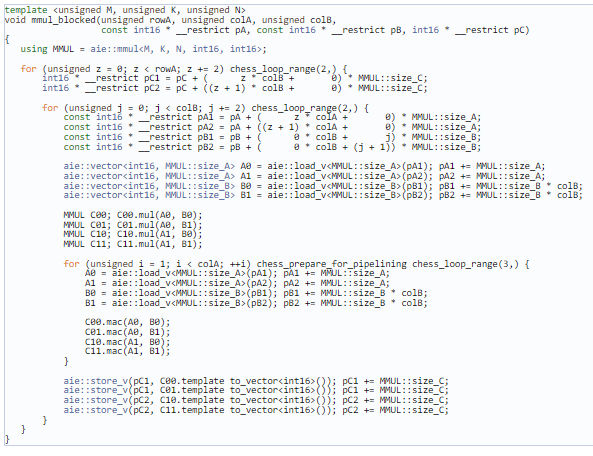
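The sketch below models that behavior in plain C++ rather than with the AIE API. A hypothetical mul returns lanes of an accumulator type wider than its int16 inputs, and its first template parameter stands in for an accumulator tag (in the AIE API these are tags such as acc48). A hypothetical to_int16 then narrows the accumulator with a right shift and saturation, loosely mirroring how accumulator results are converted back to vectors. The int64_t default is an illustrative assumption, not the API's actual default for any type combination.

```cpp
#include <array>
#include <cassert>
#include <cstddef>
#include <cstdint>
#include <limits>

// Multiplying int16 lanes produces accumulator lanes wider than the inputs.
// AccT plays the role of an accumulator tag; the int64_t default is an
// assumption made for illustration.
template <typename AccT = std::int64_t, std::size_t N>
std::array<AccT, N> mul(const std::array<std::int16_t, N>& a,
                        const std::array<std::int16_t, N>& b) {
    std::array<AccT, N> acc{};
    for (std::size_t i = 0; i < N; ++i) {
        acc[i] = static_cast<AccT>(a[i]) * static_cast<AccT>(b[i]);
    }
    return acc;
}

// Narrow an accumulator back to int16 lanes: arithmetic right shift by
// `shift`, then saturate to the int16 range.
template <typename AccT, std::size_t N>
std::array<std::int16_t, N> to_int16(const std::array<AccT, N>& acc,
                                     unsigned shift) {
    assert(shift < 8 * sizeof(AccT));
    const AccT lo = std::numeric_limits<std::int16_t>::min();
    const AccT hi = std::numeric_limits<std::int16_t>::max();
    std::array<std::int16_t, N> out{};
    for (std::size_t i = 0; i < N; ++i) {
        AccT v = acc[i] >> shift;  // arithmetic shift for negatives (C++20)
        if (v < lo) v = lo;
        if (v > hi) v = hi;
        out[i] = static_cast<std::int16_t>(v);
    }
    return out;
}
```

For example, 300 × 300 = 90000 does not fit in int16 but fits in the accumulator; shifting right by 4 gives 5625, while converting without a shift saturates to 32767. Requesting a different accumulator width is just mul<std::int32_t>(a, b).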

The AIE API encapsulates the matrix multiplication functionality in the aie::mmul class template. This class template is parametrized with the matrix multiplication shape (MxKxN), the data types, and, optionally, the requested accumulation precision. The resulting class defines a function that performs the multiplication and a data type for the result that can be converted to an accumulator/vector. The function interprets the input vectors as matrices as described by the shape parameters.

The following code snippet shows a sample blocked multiplication using the aie::mmul class. The matrices are assumed to be pre-tiled as defined by the mmul shape (MxK for A, KxN for B, and MxN for C).
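The following plain C++ sketch models the tiling scheme described above rather than reproducing aie::mmul code. The hypothetical TileMul<M, K, N>::mac plays the role of one mmul multiply-accumulate step on an MxK tile of A and a KxN tile of B, and the hypothetical blocked_matmul walks the tile grid. The storage layout (each tile contiguous and row-major, tiles ordered row-major across the grid) and the long long result type are assumptions for illustration.

```cpp
#include <cassert>
#include <cstddef>
#include <vector>

// One tile-level step: c_tile += a_tile * b_tile, analogous to a mac() on an
// M x K by K x N tile pair. Each tile is contiguous and row-major.
template <std::size_t M, std::size_t K, std::size_t N>
struct TileMul {
    static void mac(long long* c, const int* a, const int* b) {
        for (std::size_t m = 0; m < M; ++m) {
            for (std::size_t n = 0; n < N; ++n) {
                long long sum = 0;
                for (std::size_t k = 0; k < K; ++k) {
                    sum += static_cast<long long>(a[m * K + k]) * b[k * N + n];
                }
                c[m * N + n] += sum;
            }
        }
    }
};

// A is (tilesM*M) x (tilesK*K), B is (tilesK*K) x (tilesN*N), both pre-tiled.
// The result C is returned in the same pre-tiled layout with M x N tiles.
template <std::size_t M, std::size_t K, std::size_t N>
std::vector<long long> blocked_matmul(const std::vector<int>& a,
                                      const std::vector<int>& b,
                                      std::size_t tilesM, std::size_t tilesK,
                                      std::size_t tilesN) {
    assert(a.size() == tilesM * tilesK * M * K);
    assert(b.size() == tilesK * tilesN * K * N);
    std::vector<long long> c(tilesM * tilesN * M * N, 0);
    for (std::size_t i = 0; i < tilesM; ++i) {
        for (std::size_t j = 0; j < tilesN; ++j) {
            long long* c_tile = &c[(i * tilesN + j) * M * N];
            // C tile starts at zero, so every step can be a mac; real mmul
            // code would typically use mul() for the first step instead.
            for (std::size_t k = 0; k < tilesK; ++k) {
                const int* a_tile = &a[(i * tilesK + k) * M * K];
                const int* b_tile = &b[(k * tilesN + j) * K * N];
                TileMul<M, K, N>::mac(c_tile, a_tile, b_tile);
            }
        }
    }
    return c;
}
```

With 1x1x1 tiles the same routine reduces to an ordinary row-major matrix multiply, which makes it easy to cross-check a tiled layout against a naive reference.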

AI Engine API Documentation

About George Wang

George Wang is a Sr. product marketing manager for Vitis software on the AMD Software & AI marketing team. He holds a Master's degree in Digital Signal Processing and a Bachelor of Science in Electrical Engineering. George joined AMD in 2005 and has 17 years' experience in DSP and FPGA design. He also has expertise in heterogeneous computing and software acceleration with AMD FPGA/SoC/ACAP devices.
