- Technology

- AI Engine Technology

AMD AI Engine Technology

Increased Compute Density and Silicon Efficiency for Heterogeneous Acceleration

AI Engine: Meeting the Compute Demands of Next-Generation Applications

In many dynamic and evolving markets, such as 5G cellular, data center, automotive, and industrial, applications are pushing for ever increasing compute acceleration while remaining power efficient. With Moore's Law and Dennard Scaling no longer following their traditional trajectory, moving to the next-generation silicon node alone cannot deliver the benefits of lower power and cost with better performance, as in previous generations.

Responding to this non-linear increase in demand by next-generation applications, like wireless beamforming and machine learning inference, AMD has developed a new innovative processing technology, the AI Engine, as part of the Versal™ Adaptive Compute Acceleration Platform (ACAP) architecture.

AI Engine Architecture

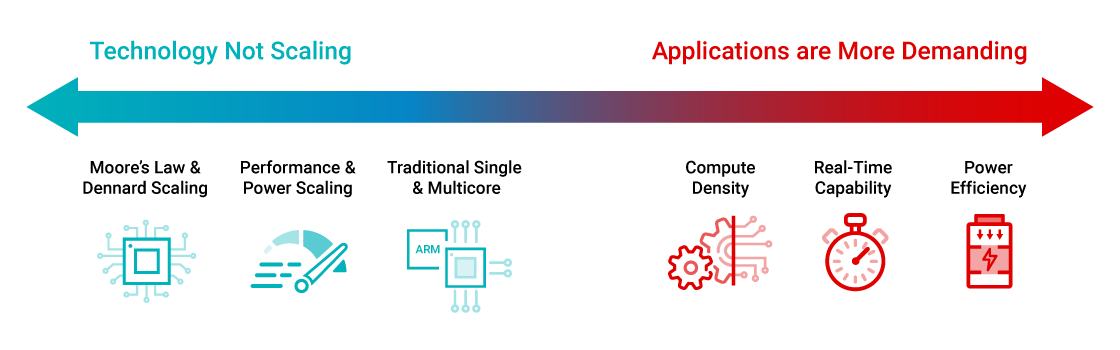

AI Engines are architected as 2D arrays consisting of multiple AI Engine tiles and allow for a very scalable solution across the Versal portfolio, ranging from 10s to 100s of AI Engines in a single device, servicing the compute needs of a breadth of applications. Benefits include:

Software Programmability

- C programmable, compile in minutes

- Library-based design for ML framework developers

Deterministic

- Dedicated instruction and data memories

- Dedicated connectivity paired with DMA engines for scheduled data movement using connectivity between AI Engine tiles

Efficiency

- Delivers up to 8X better silicon area compute density when compared with traditional programmable logic DSP and ML implementations, reducing power consumption by nominally 40%

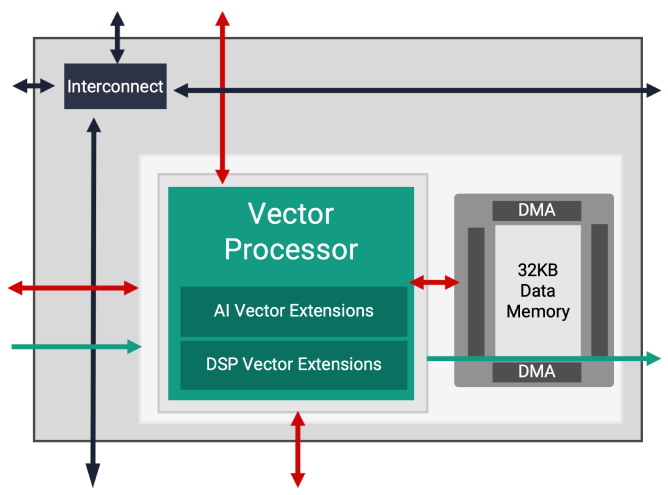

AI Engine Tile

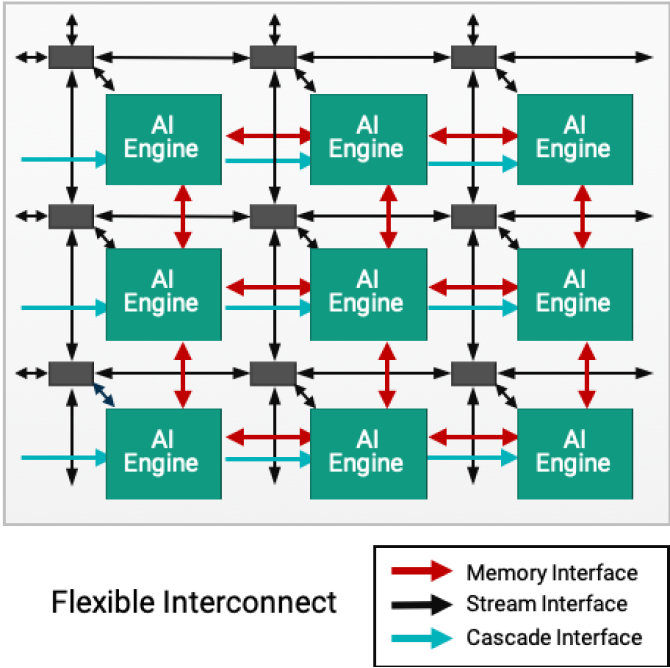

Each AI Engine tile consists of a VLIW, (Very Long Instruction Word), SIMD, (Single Instruction Multiple Data) vector processor optimized for machine learning and advanced signal processing applications. The AI Engine processor can run up to 1.3GHz enabling very efficient, high throughput and low latency functions.

As well as the VLIW Vector processor, each tile contains program memory to store the necessary instructions; local data memory for storing data, weights, activations and coefficients; a RISC scalar processor and different modes of interconnect to handle different types of data communication.

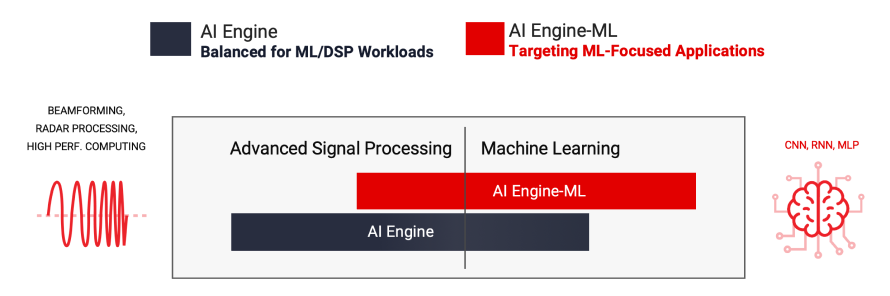

Heterogeneous Workloads: Signal Processing and Machine Learning Inference Acceleration

AMD offers two types of AI Engines: AIE and AIE-ML (AI Engine for Machine Learning), both offering significant performance improvements over previous generation FPGAs. AIE accelerates a more balanced set of workloads including ML Inference applications and advanced signal processing workloads like beamforming, radar, and other workloads requiring a massive amount of filtering and transforms. With enhanced AI vector extensions and the introduction of shared memory tiles within the AI Engine array, AIE-ML offers superior performance over AIE for ML inference focused applications, whereas AIE can offer better performance over AIE-ML for certain types of advanced signal processing.

AI Engine Tile

AIE accelerates a balanced set of workloads including ML Inference applications and advanced signal processing workloads like beamforming, radar, FFTs, and filters.

Support for many workloads/applications

- Advanced DSP for communications

- Video and image processing

- Machine Learning inference

Native support for real, complex, floating point data types

- INT8/16 fixed point

- CINT16, CINT32 complex fixed point

- FP32 floating data point

Dedicated HW features for FFT and FIR implementations

- 128 INT8 MACs per tile

See Versal ACAP AI Engine Architecture Manual to learn more.

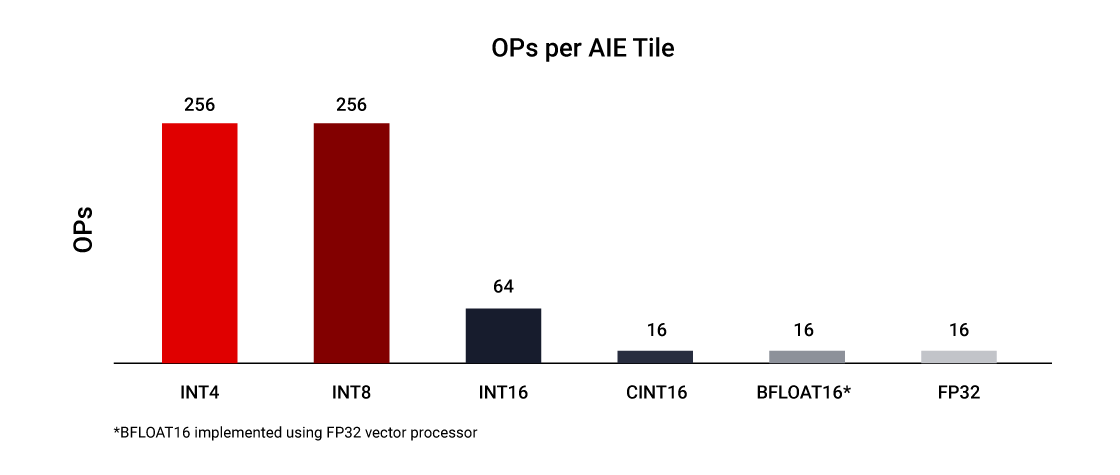

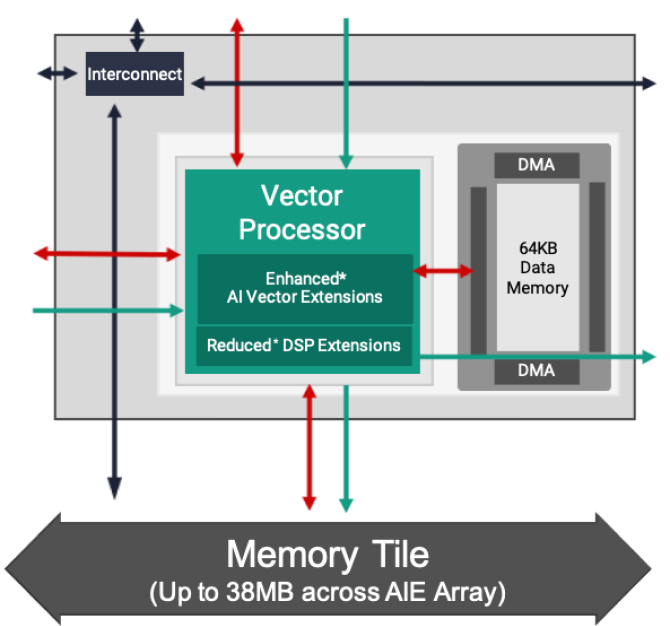

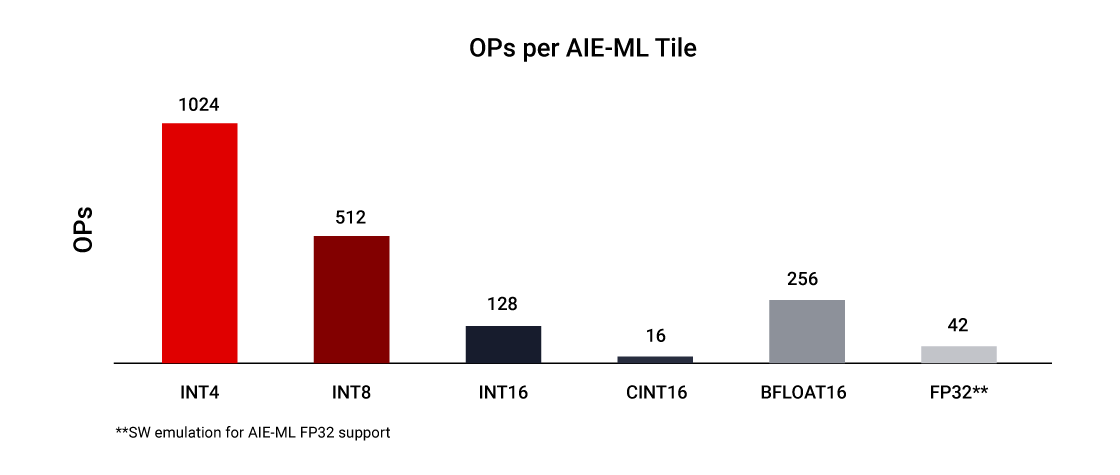

AI Engine-ML Tile

The AI Engine-ML architecture is optimized for machine learning, enhancing both the compute core and memory architecture. Capable of both ML and advanced signal processing, these optimized tiles de-emphasize INT32 and CINT32 support (common in radar processing) to enhance ML-focused applications.

Extended native support for ML data types

- INT4

- BFLOAT16

2X ML compute with reduced latency

- 512 INT4 MACs per tile

- 256 INT8 MAC per tile

Increased array memory to localize data

- Doubled local data memory per tile (64kB)

- New memory tiles (512kB) for high B/W shared memory access

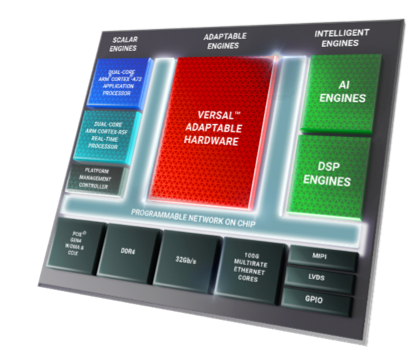

Part of a Heterogeneous Platform

The AI Engine along with Adaptable Engines (programmable logic) and Scalar Engines (processor subsystem) form a tightly integrated heterogeneous architecture on Versal Adaptive Compute Acceleration Platforms (ACAPs) that can be changed at both the hardware and software levels to dynamically adapt to the needs of a wide range of applications and workloads.

Built from the ground up to be natively software programmable, the Versal ACAP architecture features a flexible, multi-terabit per-second programmable network on chip (NoC) to seamlessly integrate all engines and key interfaces, making the platform available at boot and easily programmed by software developers, data scientists, and hardware developers alike.

Available in Versal Portfolio

AI Engine and AI Engine-ML architectures are available in both Versal AI Core and Versal AI Edge devices.

Versal AI Core Series

Versal AI Core series delivers breakthrough AI inference and wireless acceleration with AI Engines that deliver over 100X greater compute performance than today’s server-class CPUs. Featuring the highest compute in the Versal portfolio, applications for Versal AI Core ACAPs include data center compute, wireless beamforming, video and image processing, and wireless test equipment.

Versal AI Edge Series

Versal AI Edge series delivers 4X AI performance/watt vs. leading GPUs for power and thermally constrained environments at edge nodes. Accelerating the whole application from sensor to AI to real-time control, the Versal AI Edge series offers the world’s most scalable portfolio in its class, from intelligent sensor to edge compute, along with hardware adaptability to evolve with AI innovations in real-time systems.

AI Engines for Heterogeneous Workloads Ranging from Wireless Processing to Machine Learning in the Cloud, Network, and Edge

Data Center Compute

Analysis of images and video are central to the explosion of data in the data center. The convolutional neural network (CNN) nature of the workloads requires intense amounts of computation – often reaching multiple TeraOPS. AI Engines have been optimized to efficiently deliver this computational density cost effectively and power efficiently.

5G Wireless Processing

5G can provide unprecedented throughput at extremely low latency, necessitating a significant increase in signal processing. The AI Engines can execute this real-time signal processing in the radio unit (RU) and distributed unit (DU) at lower power, such as sophisticated beamforming techniques used in massive MIMO panels to increase network capacity.

ADAS and Automated Drive

CNNs are a class of deep, feed-forward artificial neural networks most commonly applied to analyzing visual imagery. CNNs have become essential as computers are being used for everything from autonomous driving vehicles to video surveillance. The AI Engines provide the necessary compute density and efficiency required for small form factors with tight thermal envelopes.

Aerospace & Defense

Merging powerful vector-based DSP Engines with AI Engines in a small form factor enables a breadth of systems in A&D, including phased array radar, early warning (EW), MILCOM, and unmanned vehicles. Supporting heterogeneous workloads ranging from signal processing, signal conditioning, and AI inference for multi-mission payloads, AI Engines deliver the compute efficiency to meet the aggressive size, weight, and power (SWaP) requirements of these mission-critical systems.

Industrial

Industrial applications including robotics and machine vision combine sensor fusion with AI/ML to perform data processing at the edge and near the source of information. AI Engines improve performance and dependability in these real-time systems, despite the uncertainty of the environment.

Wireless Test Equipment

Real-time DSP is used extensively in wireless communications test equipment. The AI Engine architecture is well-suited to handle all types of protocol implementations, including 5G from the digital front-end to beamforming and baseband.

Healthcare

Healthcare applications leveraging AI Engines include high-performance parallel beamformers for medical ultrasound, back projection for CT scanners, offloading of image reconstruction in MRI machines, and assisted diagnosis in a variety of clinical and diagnostic applications.

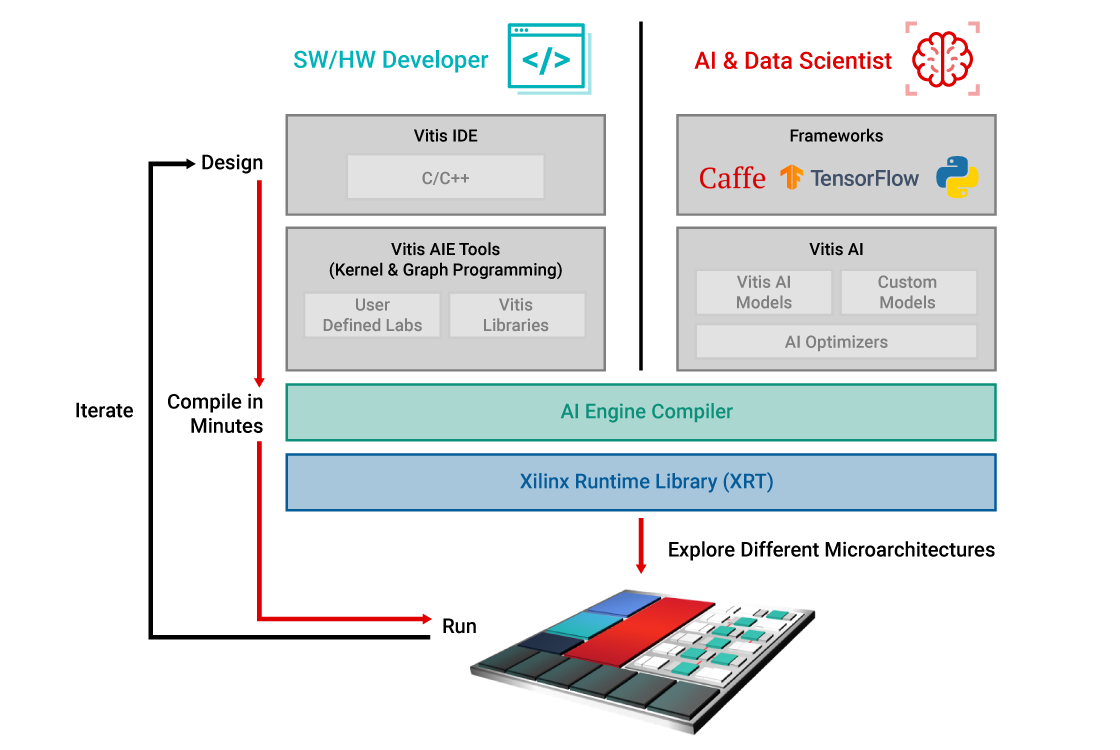

AI Engine Development Flows

AI Engines are built from the ground up to be software programmable and hardware adaptable. There are two distinct design flows for any developer to unleash the performance of these compute engines with the ability to compile in minutes and rapidly explore different microarchitectures. The two design flows consist of:

- Vitis™ IDE for a C/C++ style programming, suited for software and hardware developers

- Vitis AI for an AI/ML framework-based flow targeting AI and data scientists

AI Engine Libraries for Software/Hardware Developers and Data Scientists

AMD delivers, by way of the Vitis Acceleration library, pre-built kernels that enable:

- Shorter development cycles

- Portability across AI Engine architectures, e.g., AIE to AIE-ML

- Quick learning and adoption of AI Engine technology

- The ability for designers to focus on their own proprietary algorithms

The software and hardware developers directly program the vector processor-based AI Engines and can call on pre-built libraries with C/C++ code where appropriate.

DSP

Linear Algebra

Communications

ML Lib

BLAS

Vision & Image

Data Movers

The AI data scientist stays in his or her familiar framework environment, such as PyTorch and TensorFlow, and calls pre-built ML overlays by way of Vitis AI, without having to directly program the AI Engines.

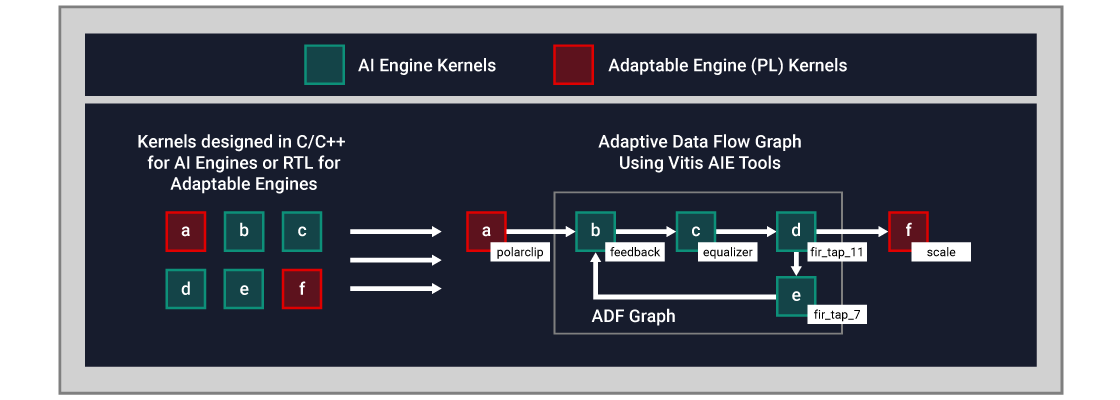

Data Flow Programming for the Software/Hardware Developer

The AI Engine architecture is based on a data flow technology. The processing elements come in arrays of 10 to 100 tiles–creating a single program across compute units. For a designer to embed directives to specify the parallelism across tiles is tedious and nearly impossible. To overcome this difficulty, AI Engine design is performed in two stages, single kernel development followed by via Adaptive Data Flow (ADF) graph creation, connecting the kernels into the overall application.

Vitis provides a single IDE cockpit that enables AI Engine single kernels using C/C++ programming code and ADF graph design. Specifically, the designers can:

- Develop kernels in C/C++ and with Vitis libraries, describing specific compute functions

- Connect kernels via Adaptive Data Flow graphs (ADF) via Vitis AI Engine tools

A single kernel runs on a single AI Engine tile by default. However, multiple kernels can run on the same AI Engine tile, sharing the processing time where the application allows.

A conceptual example is shown below:

- AI Engine kernels are developed in C/C++

- Kernels in adaptable engines, or Programmable Logic (PL), are written in RTL or Vitis HLS (High Level Synthesis)

- A data flow between kernels in both engines is performed via an ADF graph

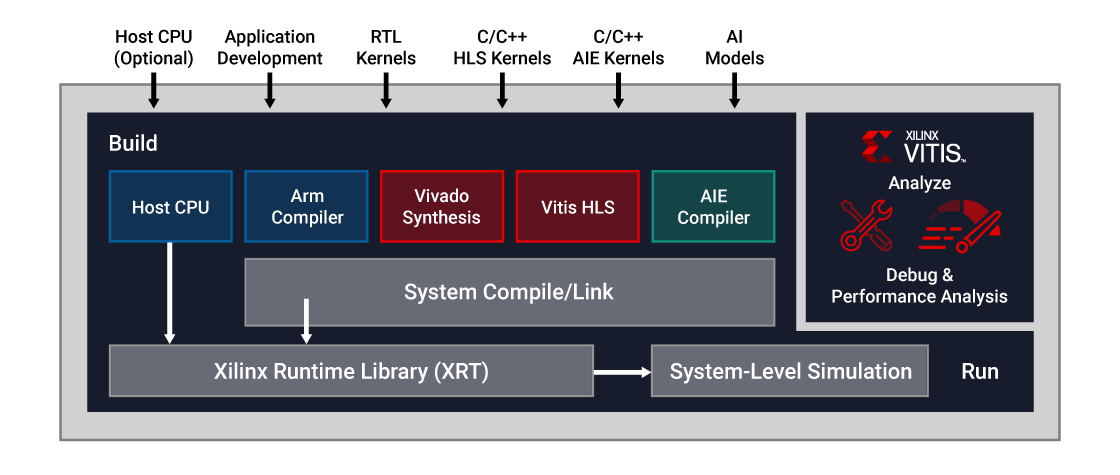

Integrating the AI Engine Design into a Complete System

Within the Vitis IDE, the AI Engine design can be included into the larger complete system, combining all aspects of the design into a unified flow where simulation, hardware emulation, debug, and deployment are possible.

- Dedicated compilers target different heterogeneous engines of the Versal platform, including the Scalar Engines (Arm® subsystem), Adaptable Engines (programmable logic) and Intelligent Engines (both DSP and AI Engines).

- A system compiler then links these individual blocks of code together and creates all the interconnections for optimizing the data movement between them and the custom memory hierarchies. The tool suite also integrates the x86 toolchain for PCIe® based systems.

- To deploy your application, Xilinx Runtime software (XRT) provides platform-independent and OS-independent APIs for managing the device configuration, memory and host-to-device data transfers, and accelerator execution.

- Once you have assembled your first prototype, you can simulate your application using a fast transaction level simulator or a cycle accurate simulator and use the performance analyzer to optimize your application for best partitioning and performance.

- When you are happy with the results, you can deploy on the Versal platform.

Download Vitis Unified Software Platform

AMD Vitis™ unified software platform provides comprehensive core development kits and libraries that use hardware-acceleration technology. Download Vitis Unified Software Platform >

Visit Vitis GitHub and AI Engine Development pages to see the breadth of AI Engine tutorials, which will help you to learn about the technology features and design methodology.

AI Engine tools, both compiler and simulator, are integrated within the Vitis IDE and require an additional dedicated license. Contact your local AMD sales representative for more information on how to access the AI Engine tools and license or visit the Contact Sales form.

Download Vitis Model Composer

AMD Vitis Model Composer is a model-based design tool that enables rapid design exploration within the Simulink® and MATLAB® environments. It facilitates AI Engine ADF graph development and testing at the system level, allowing the user to incorporate RTL and HLS blocks with AI Engine kernels and/or graphs in the same simulation. Leveraging the signal generation and visualization features within Simulink and MATLAB enables the DSP engineer to design and debug in a familiar environment. To learn how to use Versal AI Engines with Vitis Model Composer, visit the AI Engine resource page.

Purchase Evaluation Kit or Deployment Platform

Based on the Versal™ AI Core series, the VCK190 kit enables designers to develop solutions using AI Engines and DSP Engines capable of delivering over 100X greater compute performance than today's server-class CPUs. The evaluation kit has everything you need to jump-start your designs.

Learn more about the Versal AI Core series VCK190 evaluation kit >

Also available is the PCIe-based VCK5000 development card featuring Versal AI Core devices with AI Engines, built for high throughput AI inference in the data center.

Training Courses

AMD training and learning resources provide the practical skills and fundamental knowledge you need to be fully productive in your next Versal ACAP development project. Courses include:

- Getting Started with the AMD Versal ACAP Platform

- Designing with the Versal ACAP: Architecture and Methodology

- Designing with the Versal ACAP: Programmable Network on Chip

- Designing with Versal AI Engine 1 - Architecture and Design Flow

- Designing with Versal AI Engine 2 - Graph Programming with AI Engine Kernels

- Designing with Versal AI Engine 3 – Kernel Programming and Optimization

Versal ACAP Design Hub

From solution planning to system integration and validation, AMD provides tailored views of the extensive list of Versal ACAP documentation to maximize the productivity of user designs. Visit the Versal ACAP design hub to get the latest content for your design needs and explore AI Engine capabilities and design methodologies.

Adaptive Computing YouTube Channel

Visit the Adaptive Computing Developer YouTube channel for developer-to-developer content, where you’ll find AI Engine videos and tutorials, including the AI Engine A-to-Z Series.

Blog Series - AI Engine Design and Debug

This insightful blog series takes you step-by-step through an AI Engine design flow. From launching Vitis tools, to designing your first AIE kernel with design graphs, to simulation, debug, and running in real hardware.

Documentation

Versal ACAP Design Hub: AI Engines

The Versal™ ACAP design hub is a new streamlined option to navigate Versal ACAP documentation based on your design phase, where you can learn more about the AI Engine technology and design flows.

Explore Versal ACAP Design Hub / AI Engine Development