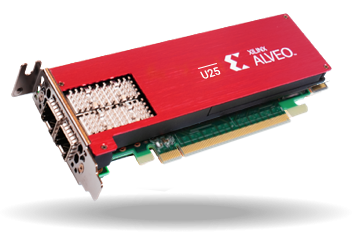

Alveo U25 SmartNIC

- Status: Discontinued

Product Description

For cloud architects building modern data centers, the Alveo U25 provides a comprehensive SmartNIC Platform that brings true convergence of network, storage, and compute acceleration functions onto a single platform.

The U25 SmartNIC Platform is based on a powerful FPGA, enabling hardware acceleration and offload to happen inline with maximum efficiency while avoiding unnecessary data movement and CPU processing. The U25 programming model supports both high-level network programming abstractions such as HLS and P4, as well as compute acceleration frameworks such as Vitis™, to enable both AMD and third-party accelerated applications.

Key Features & Benefits

A Powerful SmartNIC

The U25 delivers ultra-high throughput, small-packet performance, and low latency. The host interface supports standard NIC drivers as well as Onload™ kernel bypass to provide both TCP and packet-based APIs for network application acceleration. IEEE 1588v2 Precision Time Protocol (PTP) is provided for applications that require synchronized time stamping of packets with single-digit nanosecond accuracy.
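To make the PTP reference concrete, the sketch below shows the standard IEEE 1588 delay request-response arithmetic that turns four timestamps into a clock offset and a path delay. It is an illustration only, not the U25 driver or firmware logic; the `PtpExchange` type, the function name, and the example timestamps are hypothetical, and the calculation assumes a symmetric network path.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class PtpExchange:
    """Four timestamps (in nanoseconds) from one Sync / Delay_Req exchange."""
    t1: int  # master sends Sync (master clock)
    t2: int  # slave receives Sync (slave clock)
    t3: int  # slave sends Delay_Req (slave clock)
    t4: int  # master receives Delay_Req (master clock)


def offset_and_delay(x: PtpExchange) -> tuple[float, float]:
    """Return (slave offset from master, mean one-way path delay) in ns.

    Assumes the path delay is the same in both directions.
    """
    master_to_slave = x.t2 - x.t1
    slave_to_master = x.t4 - x.t3
    offset = (master_to_slave - slave_to_master) / 2
    delay = (master_to_slave + slave_to_master) / 2
    return offset, delay


# Hypothetical values: slave clock is 40 ns ahead, one-way delay is 500 ns.
example = PtpExchange(t1=1_000, t2=1_540, t3=2_000, t4=2_460)
print(offset_and_delay(example))  # (40.0, 500.0)
```

Hardware time stamping matters here because any software delay between the wire and the point where `t2` or `t4` is recorded shows up directly as error in the computed offset.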

A Programmable Fabric

The U25 SmartNIC contains a programmable FPGA handling all network flows. Each flow can be individually delivered to the host and/or streamed in hardware through bump-in-the-wire network acceleration functions and/or compute acceleration kernels for application processing within the FPGA.
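One way to picture the per-flow "and/or" choice is as a policy table that maps each flow to a combinable set of destinations. The sketch below is purely conceptual: the 5-tuple key, the `policy` table, the addresses, and the default-to-host rule are all assumptions made for illustration and do not represent an actual U25 or Vitis API.

```python
from enum import Flag, auto
from typing import NamedTuple


class FlowAction(Flag):
    """Destinations named in the text; flags can be combined ("and/or")."""
    HOST = auto()              # deliver to the host
    BUMP_IN_THE_WIRE = auto()  # inline network acceleration function
    COMPUTE_KERNEL = auto()    # compute acceleration kernel in the FPGA


class FlowKey(NamedTuple):
    """Hypothetical 5-tuple flow identifier (an assumption of this sketch)."""
    src_ip: str
    dst_ip: str
    src_port: int
    dst_port: int
    protocol: str


# Hypothetical policy table with made-up addresses and ports.
policy: dict[FlowKey, FlowAction] = {
    FlowKey("10.0.0.1", "10.0.0.2", 40000, 443, "tcp"):
        FlowAction.BUMP_IN_THE_WIRE | FlowAction.HOST,
    FlowKey("10.0.0.3", "10.0.0.2", 40001, 9000, "udp"):
        FlowAction.COMPUTE_KERNEL,
}


def actions_for(key: FlowKey) -> FlowAction:
    """Look up a flow; unmatched flows default to host delivery (assumed)."""
    return policy.get(key, FlowAction.HOST)
```

For example, `FlowAction.HOST in actions_for(key)` tells whether a given flow still reaches the host after inline processing.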

A Platform for Hardware Accelerated Clouds

Cloud service providers are deploying SmartNIC fabrics to achieve virtual switching and micro-segmentation of services that scale linearly with CPU cores and network links. The U25 is a platform for the industry’s first converged SmartNIC fabric, including shrink-wrapped applications.

Card Specifications

For full product specifications refer to the Product Brief.

| Board Specifications | Alveo U25 SmartNIC |
|---|---|
| Compute Resources | |
| Look-up Tables (LUTs) | 522K |
| Dimensions | |
| Height | 2.54 inch (64.4 mm) |
| Length | 6.60 inch (167.65 mm) |
| Width | Single x16 PCIe Slot |
| On-board Memory | |
| DDR | 1x 2GB x40 DDR4-2400; 1x 4GB x72 DDR4-2400 |
| Interfaces | |
| PCI Express | Gen3 x16, bifurcated to 2x Gen3 x8 depending on use case |
| Network Interfaces | 2x SFP28 |
| Time Stamp | |
| Hardware Time Stamping | Yes (1588) |
| Networking | |
| Stateless Offloads | Yes |
| Tunneling Offloads | VXLAN / Geneve |
| SR-IOV | Yes |
| Advanced Packet Filtering | Yes |
| Acceleration | DPDK, Onload™ |
| Manageability Support | |
| PXE and UEFI boot support | Yes |
| Power and Thermal | |
| Maximum Total Power | 75W |
| Thermal Cooling | Passive |
| Tool Support | |
| Vitis Developer Environment | Yes |
| Vivado Design Suite | Yes |
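As a rough sanity check on the interface rows in the table above, the sketch below compares raw PCIe Gen3 bandwidth with the aggregate network line rate. It assumes the standard PCIe Gen3 figures (8 GT/s per lane, 128b/130b encoding) and the nominal 25 Gb/s SFP28 rate per port; the table itself does not state a line rate, and packet and protocol overheads are ignored.

```python
# PCIe Gen3 signalling: 8 GT/s per lane with 128b/130b line encoding.
GEN3_GTPS_PER_LANE = 8.0
ENCODING_EFFICIENCY = 128 / 130

# Assumption: each SFP28 port runs at its nominal 25 Gb/s.
SFP28_GBPS_PER_PORT = 25.0


def pcie_gen3_gbps(lanes: int) -> float:
    """Raw PCIe Gen3 bandwidth in Gb/s per direction, before protocol overhead."""
    return GEN3_GTPS_PER_LANE * ENCODING_EFFICIENCY * lanes


def network_gbps(ports: int) -> float:
    """Aggregate nominal network line rate in Gb/s per direction."""
    return SFP28_GBPS_PER_PORT * ports


if __name__ == "__main__":
    print(f"Gen3 x16:  {pcie_gen3_gbps(16):.1f} Gb/s")
    print(f"Gen3 x8:   {pcie_gen3_gbps(8):.1f} Gb/s")
    print(f"2x SFP28:  {network_gbps(2):.1f} Gb/s")
```

Under these assumptions, even a single bifurcated x8 link has more raw bandwidth than both network ports combined.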

Onload™ - Application Acceleration Software

Onload™ dramatically accelerates and scales network-intensive applications such as in-memory databases, software load balancers, and web servers. With Onload, data centers can support 400% or more users on their cloud network while delivering improved reliability, enhanced quality of service (QoS) and higher return on investment, without modification to existing applications.

Onload allows data centers to realize:

- Improved elasticity and efficiency, leading to a lower total cost of ownership (TCO)

- Increased peak transaction rates, eliminating service brownouts

- Reduced network jitter with faster response times, equating to superior quality of service

Features:

- Add 400% or more users

- Improve message rates by 100%

- Reduce server footprint by 25%

- Reduce latency by 50%

- Experience near zero jitter

- Maximize return on capex & opex

- Enhance quality of service

Extending Data Center Investments

Onload delivers a return on capex investments by allowing data centers to redeploy 25% or more of their load-balancing servers for other tasks. Alternatively, data centers can attain a reduction in operational expenses (opex) by shrinking the overall server footprint.

How Fast Can Your Applications Go?

Onload accelerates nearly all network-intensive TCP-based applications. Typical performance improvements when utilizing Onload include:

| Use Case | Application(s) | Performance Increase | Benchmark Documents |
|---|---|---|---|
| In-Memory Databases | Couchbase, Memcached, Redis | 100% | |
| Software Load Balancers | NGINX Plus, HAProxy | 400% | |
| Web Servers/Applications | NGINX Plus, Netty.io | 50% | |

AMD has produced a series of cookbooks that describe the servers used, how they were configured, and exactly which tests were run. The cookbooks are intended to let customers reproduce the results, which can at first appear somewhat remarkable.

To obtain a copy, contact us.
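To relate the performance-increase figures in the table above to server counts, the sketch below works through an idealized calculation. It assumes that a "performance increase" of p% means each server handles (1 + p/100) times its previous load and that the workload is purely network-bound and scales linearly. Both are simplifying assumptions, so the results are upper bounds rather than expected savings; the 25% redeployment figure quoted earlier is more conservative. The function name and the 100-server fleet are hypothetical.

```python
import math


def servers_needed(current_servers: int, increase_pct: float) -> int:
    """Servers needed for the same load after a per-server capacity increase.

    Idealized: assumes capacity scales by (1 + increase_pct / 100).
    """
    speedup = 1 + increase_pct / 100
    return math.ceil(current_servers / speedup)


# Performance increases taken from the table; fleet size is hypothetical.
table = {
    "In-Memory Databases": 100,
    "Software Load Balancers": 400,
    "Web Servers/Applications": 50,
}

if __name__ == "__main__":
    for use_case, pct in table.items():
        print(f"{use_case}: 100 -> {servers_needed(100, pct)} servers")
```

For a 100-server fleet this gives 50, 20, and 67 servers respectively under the stated assumptions.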

Ensuring Uptime and Network Compatibility

Onload is built from the same I/O software technology that powers nearly every financial market and high-frequency trading application on the planet. POSIX compliant, Onload ensures compatibility with TCP-based applications, management tools, and network infrastructures. In addition, Onload provides RDMA-like performance without requiring a forklift upgrade to the data center’s network infrastructure and can be deployed across x86-based platforms running Linux – bare metal, virtual machine or container.
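As an illustration of what "POSIX compliant" means for applications, the sketch below is an ordinary TCP round-trip timer written against the standard socket API, with nothing vendor-specific in it. This is the kind of unmodified program the text describes as a candidate for acceleration. Onload is typically applied by launching such a program through its `onload` wrapper command; treat that invocation as an assumption to confirm against the Onload user guide. The loopback address is used only to keep the example self-contained; loopback traffic never reaches the NIC, so a real measurement would target an address served by the adapter.

```python
import socket
import threading
import time


def echo_once(server: socket.socket) -> None:
    """Accept one connection and echo back whatever arrives until EOF."""
    conn, _ = server.accept()
    with conn:
        while data := conn.recv(4096):
            conn.sendall(data)


def round_trip(payload: bytes = b"ping") -> tuple[bytes, float]:
    """Send payload to a loopback echo server; return (reply, seconds)."""
    with socket.create_server(("127.0.0.1", 0)) as server:  # port 0: OS picks
        port = server.getsockname()[1]
        worker = threading.Thread(target=echo_once, args=(server,))
        worker.start()
        with socket.create_connection(("127.0.0.1", port)) as client:
            start = time.perf_counter()
            client.sendall(payload)
            reply = b""
            while len(reply) < len(payload):
                chunk = client.recv(4096)
                if not chunk:
                    break
                reply += chunk
            elapsed = time.perf_counter() - start
        worker.join()  # client is closed, so the echo loop sees EOF and exits
    return reply, elapsed


if __name__ == "__main__":
    reply, elapsed = round_trip()
    print(reply, f"{elapsed * 1e6:.1f} us")
```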